Random Forest: Interview Questions & Answers

Medium-to-Hard Concepts Explained the Way Interviewers Expect

Introduction

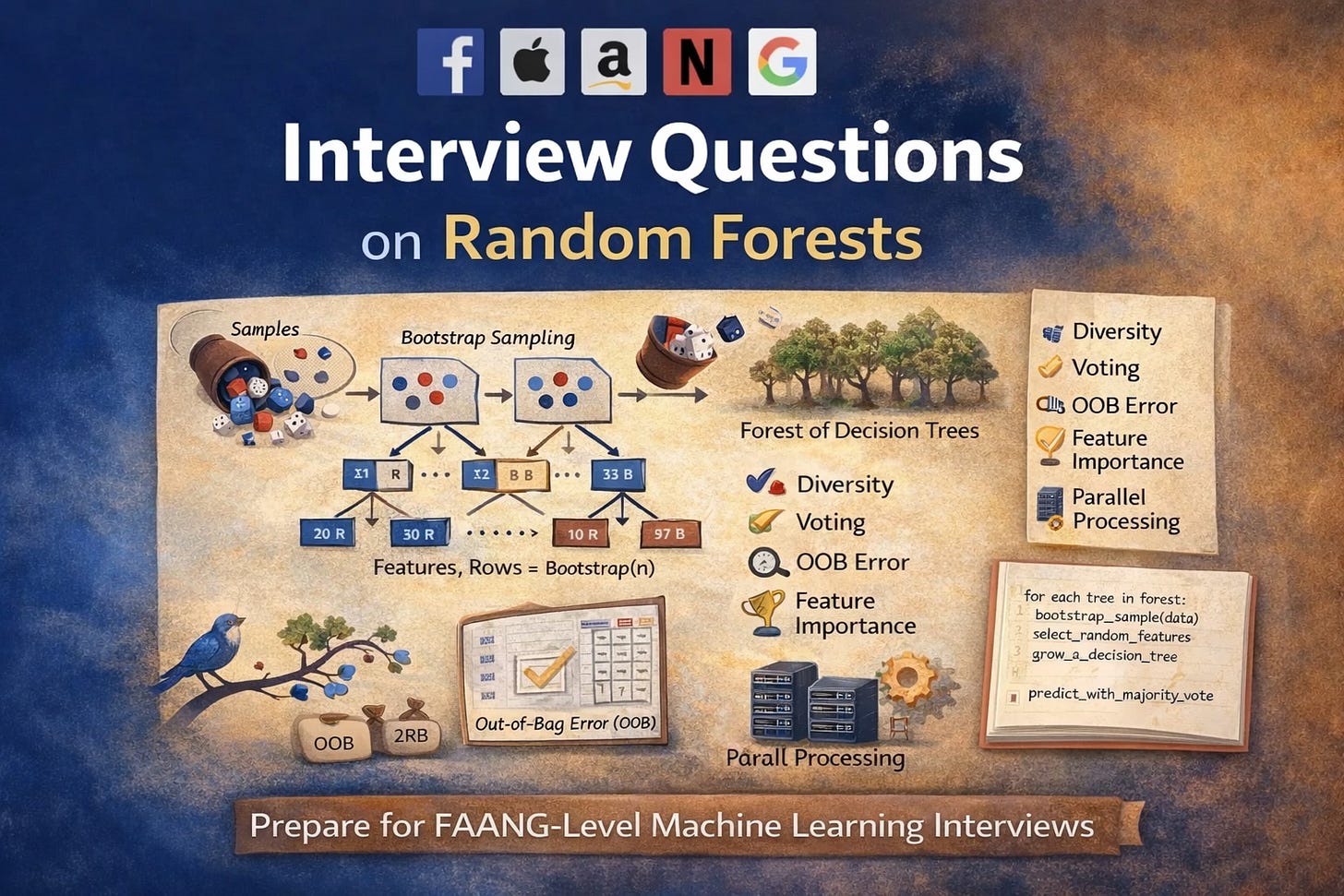

Random Forest is one of those algorithms that looks deceptively simple on the surface but reveals a surprising amount of depth once you dig into it. Because of this, it has become a favorite interview topic at FAANG and other top tech companies, not just for checking API knowledge but for testing how well a candidate understands bias–variance trade-offs, randomness, generalization, and real-world deployment constraints.

In interviews, questions rarely stop at “What is Random Forest?”. Instead, they probe why it works, when it fails, and how its theoretical ideas translate into production systems. You are expected to reason about bootstrapping, feature randomness, correlation between trees, uncertainty estimation, and scaling behavior, often with math and intuition side by side.

This post curates and answers medium-to-hard Random Forest interview questions that have been asked repeatedly in real interviews. Each answer is structured to help you think the way an interviewer expects, focusing on clarity, depth, and practical understanding rather than memorization.

Q1: How does Random Forest build its trees, and why does it perform better than a single Decision Tree?

How trees are built

Random Forest trains many decision trees independently using two sources of randomness:

Bootstrap sampling (row randomness)

Each tree is trained on a random sample with replacement from the training data.

Feature subsampling (column randomness)

At every split, the tree considers only a random subset of features instead of all features.

Why this works better than a single tree

A single decision tree:

Has low bias

But very high variance (small data changes → very different trees)

Random Forest:

Keeps low bias (trees are still deep)

Dramatically reduces variance by averaging many decorrelated trees
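The variance argument above can be made concrete with the standard formula for the variance of an average of identically distributed, pairwise-correlated predictors: ρσ² + (1 − ρ)σ²/T. The sketch below is only an illustration; the function name and the correlation values 0.8 and 0.2 are assumptions chosen to show the effect, not measurements from a real forest.

```python
def ensemble_variance(tree_variance, correlation, n_trees):
    """Variance of the mean of n_trees predictors that each have variance
    tree_variance and share the same pairwise correlation."""
    irreducible = correlation * tree_variance            # does not shrink with more trees
    averaged_out = (1 - correlation) * tree_variance / n_trees
    return irreducible + averaged_out


# Illustrative (assumed) numbers: unit variance per tree.
single_tree = ensemble_variance(1.0, 1.0, 1)             # one tree, nothing to average
bagged_correlated = ensemble_variance(1.0, 0.8, 500)     # highly correlated trees
decorrelated = ensemble_variance(1.0, 0.2, 500)          # feature subsampling lowers correlation

print(single_tree, bagged_correlated, decorrelated)
```

Adding trees only shrinks the second term, so the correlation between trees sets the floor. That is why feature subsampling matters on top of bagging.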

Q2: What is bagging, and how is it different from boosting? How is bagging used in Random Forest?

Bagging (Bootstrap Aggregating)

Train models in parallel

Each model sees a bootstrap sample

Final prediction = average / majority vote

Goal: reduce variance

Boosting

Train models sequentially

Each new model focuses on previous errors

Examples: AdaBoost, Gradient Boosting

Goal: reduce bias (and variance)

In Random Forest

Bagging provides data diversity

Feature subsampling provides model diversity

Together they decorrelate trees

Q3: What is Out-of-Bag (OOB) error? How is it computed and why is it useful?

Because of bootstrapping:

Each tree's bootstrap sample contains ~63.2% of the unique training samples

Remaining ~36.8% are Out-of-Bag for that tree

How OOB error is computed

For each training sample:

Collect predictions only from trees where the sample was OOB

Aggregate predictions

Compare with true label

Why it’s useful

Acts like free cross-validation

No separate validation set needed

Usually close to test error in practice (often slightly pessimistic, since each sample is scored by only a fraction of the trees)
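As a rough illustration, scikit-learn exposes the OOB estimate through the `oob_score=True` option and the fitted `oob_score_` attribute. The synthetic dataset, split sizes, and tree count below are assumptions made for this sketch.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import train_test_split

# Synthetic data (assumed for illustration only).
X, y = make_classification(
    n_samples=1000, n_features=20, n_informative=5, random_state=0
)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0
)

# oob_score=True asks the forest to score each training sample using
# only the trees whose bootstrap sample did not contain it.
forest = RandomForestClassifier(
    n_estimators=200, bootstrap=True, oob_score=True, random_state=0
)
forest.fit(X_train, y_train)

oob_accuracy = forest.oob_score_               # estimated without a validation set
test_accuracy = forest.score(X_test, y_test)   # held-out check for comparison
print(f"OOB accuracy:  {oob_accuracy:.3f}")
print(f"Test accuracy: {test_accuracy:.3f}")
```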

Q4: What are the key hyperparameters of Random Forest and how do they affect the model?

Some of the key hyperparameters are:

n_estimators

max_depth

min_samples_leaf

max_features

bootstrap

How do they affect the model?

Deeper trees → low bias, high variance

Fewer features per split → lower correlation

Larger leaf size → regularization

Common defaults (classification):

max_features = sqrt(d)

Deep trees + many estimators
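To see one of these knobs in action, here is a small sketch that fits two scikit-learn forests differing only in `min_samples_leaf`. The noisy synthetic dataset and the specific values (1 vs. 25) are assumptions chosen to make the regularization effect visible.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier

# Synthetic data with 10% label noise (assumed for illustration).
X, y = make_classification(
    n_samples=600, n_features=20, n_informative=5, flip_y=0.1, random_state=0
)


def fit_forest(min_samples_leaf):
    """Fit a forest with the hyperparameters listed above made explicit."""
    forest = RandomForestClassifier(
        n_estimators=100,                   # more trees -> more stable average
        max_depth=None,                     # grow trees fully (low bias, high variance)
        min_samples_leaf=min_samples_leaf,  # larger leaves -> regularization
        max_features="sqrt",                # features considered per split
        bootstrap=True,                     # row sampling with replacement
        random_state=0,
    )
    return forest.fit(X, y)


deep = fit_forest(min_samples_leaf=1)
regularized = fit_forest(min_samples_leaf=25)

train_acc_deep = deep.score(X, y)
train_acc_regularized = regularized.score(X, y)
print(train_acc_deep, train_acc_regularized)
```

The forest with single-sample leaves is free to memorize the noisy labels, so its training accuracy should be at least as high as the regularized one. Whether that helps on unseen data is a separate question that needs OOB or validation scores.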

Q5: How does Random Forest handle missing values?

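Classic Random Forest implementations do not natively handle missing values, so you usually need to handle them explicitly. This was true of scikit-learn for a long time; recent releases (1.4 and later) accept NaN inputs in Random Forest, but older versions raise an error.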

1. Pre-imputation (most common)

Mean/median (numerical)

Mode (categorical)

Model-based imputation

2. Indicator variables

Add a binary feature that flags whether the original value was missing. This lets trees learn “missingness” itself as a signal.

3. Surrogate splits (theoretical)

Used in CART

If primary split feature is missing, use correlated feature

Not widely implemented in RF libraries
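The first two strategies are often combined. The sketch below uses only the standard library; the helper name `impute_with_indicator` and the toy `age` column are hypothetical and exist just to show the idea.

```python
from statistics import median


def impute_with_indicator(values):
    """Median-impute a numeric column and return a missingness flag column.

    In a real pipeline the median should be computed on training data only
    and reused at prediction time, to avoid leaking validation statistics.
    """
    observed = [v for v in values if v is not None]
    fill_value = median(observed)
    imputed = [fill_value if v is None else v for v in values]
    was_missing = [1 if v is None else 0 for v in values]
    return imputed, was_missing


# Hypothetical column with two missing entries.
age = [25, None, 40, 31, None]
age_imputed, age_missing = impute_with_indicator(age)

print(age_imputed)   # imputed values, fed to the forest as the original feature
print(age_missing)   # extra binary feature the trees can split on
```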

Q6: How is feature importance computed in Random Forest?

Random Forest provides two widely used notions of feature importance, each answering a slightly different question.

Impurity-Based (Gini) Importance

During training, every split reduces node impurity (Gini or entropy).

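For each feature, Random Forest sums this impurity reduction over every node that splits on the feature, across all trees:

\(\text{Importance}(f) = \sum_{t=1}^{T} \sum_{n \in t,\ \text{split on } f} \Delta \text{Impurity}(n) \)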

This measures how frequently and how effectively a feature is used.

Advantages

Extremely fast

Available immediately after training

Limitations

Biased toward high-cardinality features

Unreliable with correlated features (credit is split arbitrarily among them)

Permutation Importance

Permutation importance answers a stronger question:

How much does the model actually rely on this feature?

The process is simple:

Measure baseline model performance

Randomly shuffle one feature

Measure performance drop

\(\text{Importance}(f) = \text{Perf}_{\text{original}} - \text{Perf}_{\text{shuffled}} \)

Advantages

Model-agnostic

Reflects true predictive dependency

Limitations

Computationally expensive

Still unstable with correlated features
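The three-step process above fits in a few lines. This is a minimal, model-agnostic sketch in plain Python; the toy model `toy_predict`, which only looks at feature 0, is a hypothetical stand-in for a trained forest's predict function.

```python
import random


def accuracy(y_true, y_pred):
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def permutation_importance(predict, X, y, feature_idx, n_repeats=10, seed=0):
    """Average drop in accuracy after shuffling one feature column."""
    rng = random.Random(seed)
    baseline = accuracy(y, [predict(row) for row in X])
    drops = []
    for _ in range(n_repeats):
        column = [row[feature_idx] for row in X]
        rng.shuffle(column)  # break the link between this feature and the target
        X_shuffled = [
            row[:feature_idx] + [value] + row[feature_idx + 1:]
            for row, value in zip(X, column)
        ]
        shuffled = accuracy(y, [predict(row) for row in X_shuffled])
        drops.append(baseline - shuffled)
    return sum(drops) / len(drops)


def toy_predict(row):
    """Hypothetical model that depends only on feature 0."""
    return int(row[0] > 0.5)


data_rng = random.Random(42)
X = [[data_rng.random(), data_rng.random()] for _ in range(200)]
y = [int(row[0] > 0.5) for row in X]

importance_used = permutation_importance(toy_predict, X, y, feature_idx=0)
importance_ignored = permutation_importance(toy_predict, X, y, feature_idx=1)
print(importance_used, importance_ignored)
```

Shuffling the feature the model ignores leaves predictions unchanged, so its importance is exactly zero, while shuffling the feature it relies on causes a large drop. In practice, measure this on held-out data rather than on the training set.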

Q7. Random Forest achieves perfect training accuracy but poor validation accuracy. What went wrong?

This situation indicates overfitting, driven primarily by high variance. Although Random Forest reduces variance compared to a single decision tree, it does not eliminate it.

Common causes

Trees are too deep (max_depth too large)

Leaves are too small (min_samples_leaf too low)

Dataset is small or noisy

Feature leakage from target into inputs

Too few trees to average out noise

How to fix it

Increase min_samples_leaf

Limit max_depth

Increase n_estimators

Monitor Out-of-Bag error

Use permutation importance to detect leakage

Key insight:

Random Forest controls variance through averaging, but if each tree memorizes noise, the ensemble still overfits.

Q8. How does Random Forest handle categorical variables? What preprocessing is required?

In theory, decision trees can split directly on categorical features. In practice, most Random Forest implementations expect numeric inputs.

Common encoding strategies

One-Hot Encoding

Safe and robust

Increases dimensionality

Random Forest tolerates the resulting sparse columns, though very high-cardinality features can dilute splits

Ordinal Encoding

Risky when no true order exists

Can introduce artificial hierarchy

Target / Mean Encoding

Powerful for high-cardinality features

Must be cross-validated to avoid leakage

Q9. Why does a bootstrap sample contain ~63.2% of unique data points?

For a dataset of size N:

Probability a sample is not selected in one draw:

\(1-1/N\)

Probability it is never selected in N draws:

\((1-1/N)^N\)

As N→∞:

\(\lim_{N \to \infty} \left(1 - \frac{1}{N}\right)^N = e^{-1} \approx 0.368 \)

So:

About 36.8% of samples are Out-of-Bag

About 63.2% appear at least once
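The derivation above is easy to sanity-check numerically. This sketch compares the exact finite-N probability with the limit and with one simulated bootstrap draw; the dataset size of 10,000 and the seed are arbitrary assumptions.

```python
import math
import random


def oob_probability(n):
    """Exact probability that a given sample is never drawn in n draws."""
    return (1 - 1 / n) ** n


def simulated_unique_fraction(n, seed=0):
    """Draw one bootstrap sample of size n and return the share of unique indices."""
    rng = random.Random(seed)
    drawn = {rng.randrange(n) for _ in range(n)}
    return len(drawn) / n


n = 10_000
exact_oob = oob_probability(n)
unique_fraction = simulated_unique_fraction(n)

print(f"exact OOB probability: {exact_oob:.4f}")
print(f"limit e^-1:            {math.exp(-1):.4f}")
print(f"simulated unique share: {unique_fraction:.4f}")
```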

Q10. How does node impurity choice (Gini vs Entropy) affect Random Forest performance?

Gini Impurity

Faster to compute

Favors dominant classes

Default in most implementations

Entropy

More sensitive to class balance

Encourages purer splits

Computationally heavier

In practice

For Random Forests:

Difference is usually negligible

Tree randomness dominates behavior

Depth, data quality, and feature randomness matter more
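For reference, both criteria are simple functions of the class proportions in a node. The sketch below computes them for a few illustrative (assumed) class mixes; both reach zero for a pure node and peak for a 50/50 split, which is one reason the choice rarely changes the result.

```python
import math


def gini(proportions):
    """Gini impurity: 1 - sum(p_k^2)."""
    return 1.0 - sum(p * p for p in proportions)


def entropy(proportions):
    """Shannon entropy in bits: -sum(p_k * log2(p_k)), skipping empty classes."""
    return -sum(p * math.log2(p) for p in proportions if p > 0)


for mix in ([1.0, 0.0], [0.9, 0.1], [0.5, 0.5]):
    print(mix, round(gini(mix), 3), round(entropy(mix), 3))
```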

Q11: How can Random Forest measure similarity between observations? How is this useful for unsupervised tasks?

Random Forest can compute a proximity (similarity) matrix between samples, even though it is primarily a supervised algorithm.

How proximity is defined

Two samples are considered similar if they land in the same leaf node of a tree.

Proximity between samples i and j is the fraction of trees in which they share a leaf.

\(\text{Proximity}(i, j) = \frac{1}{T} \sum_{t=1}^{T} \mathbb{1} \big( \ell_t(x_i) = \ell_t(x_j) \big) \)

Why proximity helps

Captures nonlinear similarity

Uses feature interactions learned by trees

No explicit distance metric needed

Applications

Clustering using proximity matrix

Outlier detection (low average proximity)

Visualization via MDS or t-SNE
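The proximity formula translates directly into code once you know which leaf each sample lands in for each tree. In scikit-learn, a fitted forest's `apply` method returns such leaf indices; to keep this sketch dependency-free, the `leaves` table below is a hand-made, hypothetical example with 3 samples and 3 trees.

```python
def proximity_matrix(leaves):
    """leaves[i][t] is the leaf index of sample i in tree t.

    Returns an n x n matrix where entry (i, j) is the fraction of trees
    in which samples i and j share a leaf.
    """
    n_samples = len(leaves)
    n_trees = len(leaves[0])
    proximity = [[0.0] * n_samples for _ in range(n_samples)]
    for i in range(n_samples):
        for j in range(n_samples):
            shared = sum(1 for a, b in zip(leaves[i], leaves[j]) if a == b)
            proximity[i][j] = shared / n_trees
    return proximity


# Hypothetical leaf assignments: rows are samples, columns are trees.
leaves = [
    [0, 2, 1],
    [0, 2, 3],
    [4, 5, 3],
]
proximity = proximity_matrix(leaves)
for row in proximity:
    print([round(value, 2) for value in row])
```

Using 1 − proximity as a dissimilarity gives a matrix that can be passed to clustering or embedding methods that accept precomputed distances. Note that the full matrix is quadratic in the number of samples.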

Q12. How does Random Forest handle correlated features? Does correlation matter?

Correlation matters, but less than you might expect.

What happens with correlated features

Correlated features compete for splits

Importance gets shared or diluted

One feature may dominate early splits

Why Random Forest is robust

Feature subsampling ensures correlated features don’t always compete

Different bootstrap samples cause different features to win splits

Averaging across trees stabilizes predictions

What still breaks

Feature importance becomes unreliable

Permutation importance underestimates correlated features

Q13. How can Out-of-Bag predictions be used to estimate uncertainty or confidence intervals?

Random Forest naturally supports uncertainty estimation through its ensemble structure.

Key idea

Each sample receives predictions from a subset of trees (those where it is OOB).

Regression

Use distribution of OOB predictions

Estimate variance or quantiles

Classification

Use vote proportions

Predictive uncertainty ≈ vote entropy (low entropy means high confidence)

Why this matters

Confidence-aware predictions

Risk-sensitive decision systems

Model debugging
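Both ideas reduce to summarizing the per-tree predictions a sample receives. The sketch below assumes you have already collected those predictions (for OOB use, only from trees where the sample was out of bag); the vote lists and numeric predictions are made-up illustrations.

```python
import math
from collections import Counter
from statistics import mean, pstdev


def vote_entropy(votes):
    """Entropy (in bits) of the class votes; 0 means all trees agree."""
    counts = Counter(votes)
    total = len(votes)
    return -sum((c / total) * math.log2(c / total) for c in counts.values())


def regression_spread(tree_predictions):
    """Mean prediction and standard deviation across trees."""
    return mean(tree_predictions), pstdev(tree_predictions)


# Classification: hypothetical votes from 10 trees.
unanimous = vote_entropy(["spam"] * 10)
split_vote = vote_entropy(["spam"] * 5 + ["ham"] * 5)

# Regression: hypothetical predictions from 3 trees.
center, spread = regression_spread([9.0, 10.0, 11.0])

print(unanimous, split_vote, center, spread)
```

The spread across trees is a heuristic signal of model disagreement, not a calibrated confidence interval. Quantiles of the per-tree predictions can be used the same way.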

Q14. What are the trade-offs in parallelizing Random Forest training?

Random Forest is embarrassingly parallel, but trade-offs still exist.

What parallelizes well

Tree construction

Bootstrap sampling

Feature selection

What doesn’t

Memory bandwidth

Aggregation overhead

I/O bottlenecks

Q15. How would you tune and evaluate Random Forest on a highly imbalanced dataset?

Key challenges

Accuracy becomes meaningless

Minority class is under-represented

Default splits favor majority class

Model-level strategies

class_weight="balanced"

Increase min_samples_leaf

Reduce max_depth

Data-level strategies

Stratified sampling

SMOTE or undersampling

Cost-sensitive learning

Evaluation metrics

Precision–Recall AUC

F1 score

Recall at fixed precision
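Two of the points above can be shown with a toy example: why accuracy misleads, and what "balanced" class weights look like. The weight formula n_samples / (n_classes × class_count) mirrors the heuristic scikit-learn documents for `class_weight="balanced"`; the 95/5 label split and the helper names are assumptions for this sketch.

```python
from collections import Counter


def balanced_class_weights(labels):
    """Weight each class inversely to its frequency."""
    counts = Counter(labels)
    n_samples, n_classes = len(labels), len(counts)
    return {cls: n_samples / (n_classes * count) for cls, count in counts.items()}


def accuracy(y_true, y_pred):
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def recall(y_true, y_pred, positive=1):
    true_pos = sum(1 for t, p in zip(y_true, y_pred) if t == positive and p == positive)
    false_neg = sum(1 for t, p in zip(y_true, y_pred) if t == positive and p != positive)
    return true_pos / (true_pos + false_neg)


# Hypothetical imbalanced labels: 95 negatives, 5 positives.
y_true = [0] * 95 + [1] * 5
always_majority = [0] * 100  # a useless model that never predicts the minority

baseline_accuracy = accuracy(y_true, always_majority)
minority_recall = recall(y_true, always_majority)
weights = balanced_class_weights(y_true)

print(baseline_accuracy, minority_recall, weights)
```

The useless baseline scores 95% accuracy with zero minority recall, which is why the metrics listed above are preferred. The weights show how much more each minority-class sample counts during training.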

Q16. How would you deploy a Random Forest model for real-time predictions? What ensures low latency and scalability?

Random Forest deployment is often simpler than deep models, but careful system design is still required for real-time use.

Key challenges

Large number of trees

Memory-heavy models

Latency grows linearly with tree count

Best practices

Limit tree depth to reduce inference time

Serialize efficiently (e.g., joblib, ONNX where applicable)

Warm-load models in memory (no disk access at inference)

Batch predictions when possible

Horizontal scaling using stateless services

Production architecture

Feature preprocessing as a shared service

Model served behind REST/gRPC

Cache frequent predictions if feature space is stable
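A few of these practices (warm-loading, batching, caching) can be sketched without any serving framework. Everything here is hypothetical: `StubForest` stands in for a trained model that would normally be deserialized once at process start, and the cache size is an arbitrary assumption.

```python
from functools import lru_cache


class StubForest:
    """Hypothetical stand-in for a trained forest loaded once at startup."""

    def __init__(self):
        self.calls = 0  # counts how often the model is actually invoked

    def predict(self, rows):
        self.calls += 1
        return [int(sum(row) > 1.0) for row in rows]


# Warm-load: the model lives in memory for the lifetime of the process,
# so no request pays the cost of reading it from disk.
MODEL = StubForest()


@lru_cache(maxsize=10_000)
def predict_cached(features):
    """Cache single-row predictions; features must be a hashable tuple."""
    return MODEL.predict([features])[0]


def predict_batch(rows):
    """Score many rows in one call to amortize per-call overhead."""
    return MODEL.predict(rows)


first = predict_cached((0.7, 0.6))
second = predict_cached((0.7, 0.6))  # served from the cache, model not called again
calls_after_cache = MODEL.calls
batch = predict_batch([(0.1, 0.2), (0.9, 0.8)])

print(first, second, calls_after_cache, batch)
```

Caching only pays off when identical feature vectors recur, and the cache must be cleared whenever the model is replaced.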

Conclusion

Random Forest interviews are rarely about remembering definitions. They are about demonstrating that you understand why ensembles work, how randomness reduces correlation, and how these ideas affect performance, interpretability, and deployment at scale.

If you can confidently explain concepts like bootstrap sampling, Out-of-Bag error, feature importance bias, proximity measures, and system trade-offs, you are already operating at a strong interview level. These are the signals interviewers look for when assessing whether someone can move beyond toy datasets and build robust models in production.

This post is part of a broader effort to create deep, interview-focused explanations, the kind that help you reason under pressure rather than recite answers.
